en

Please fill in your name

Mobile phone format error

Please enter the telephone

Please enter your company name

Please enter your company email

Please enter the data requirement

Successful submission! Thank you for your support.

Format error, Please fill in again

Confirm

The data requirement cannot be less than 5 words and cannot be pure numbers

https://www.datatang.com/

https://www.datatang.ai/

m.datatang.ai

SEE YOU IN CVPR 2022

From:Datatang Date:2022-06-13

The IEEE / CVF Computer Vision and Pattern Recognition Conference (CVPR) will be hold from 2022.6.19– 2022.6.24.

CVPR is the premier annual computer vision event comprising the main conference and several co-located workshops and courses. With its high quality and influence, it provides an exceptional value for students, academics, and industry researchers. Over the past years, there have been exciting innovations in the design of deep network for vision applications and AI algorism. This year, more than 2066 papers was accepted by CVPR and over 3000 people is attending this event.

As a leader in AI service provider and a silver sponsor of CVPR2022, we will be hosting a booth from June 21st through the 23rd at the CVPR Expo so come and stop by to say hello! Check out the map here to find us:

Also, don’t forget to participate our datasets give away, we are happy to be part of CVPR2022, to celebrate this event, we give away 400 copies of $20,000 worthy dataset for all AI Model Creators and Academic Researchers.

There are 5 datasets of 97% accuracy are included in the dataset

100 People 3D Living_Face & Anti_Spoofing Dataset

Description:

This dataset is composed of 100 people, 168 images for each person, 50 males, 50 females; various facial expressions, facial postures, anti-spoofing samples, multiple light conditions, multiple scenes are included, and annotated with label the person ID, race, gender, age, facial action, collecting scene, light condition

100 People 2D Living_Face &Anti_Spoofing Dataset

Description:

This dataset is composed of 100 people, 168 images for each person, 50 males, 50 females; various facial expressions, facial postures, anti-spoofing samples, multiple light conditions, multiple scenes are included, and annotated with the person ID, race, gender, age, facial action, collecting scene, light condition etc.

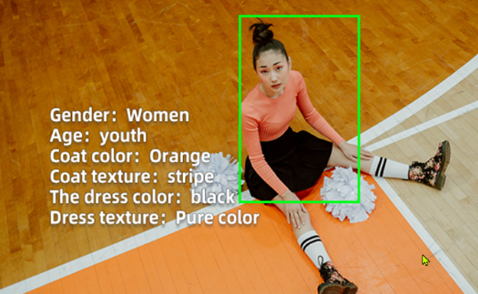

200 People Re-Id Dataset In Real Surveillance Scenes

Description:

This dataset is composed of 200 people, 15–22 images per person; including indoor and outdoor scenes (such as supermarket, mall and residential area, etc.) different ages, different time periods, different cameras, different human body orientations and postures, different ages collecting environment.

200 People Re-Id Collection Dataset in Surveillance Scenes

Description:

This dataset is composed of 200 people, the gender distribution is male and female, the age distribution is from children to the elderly, labeled for human body rectangular bounding boxes, 15 human body attributes; label the subject’s gender, age, race, collecting scenes, clothing categories, camera ID, camera height.

People 3D Liveness Detection Dataset

Description:

This dataset is composed of 5 people, 126 groups (282 images) for each person, all Chinese, covered multiple facial postures, 3D mask anti-spoofing samples, multiple light conditions, multiple scenes, labeled for the person ID, race, gender, age, facial action, anti-spoofing type, light condition

To participate, please scan the code below and fill out the form

Or click this link: https://forms.office.com/r/QkcVk91T3J

Recent

+1(626)594-5598

+1(626)594-5598